Have you met... James Bridle

James Bridle's work engages with technological developments, software programming and network infrastructure, culminating in visual mappings of these systems and their impacts and interactions within the social and cultural spheres. We spoke with him about his exhibition, ‘Failing to distinguish between a tractor trailer and the bright white sky,’ currently on view at Nome.

Let’s start with the exhibition title. It was taken from an accident report involving a self-driving automobile. What drew you to this particular fragment of text?

It’s the strangeness of the line itself, which is a pure description. It’s something so alien. It’s so obviously a product of a non-human intelligence. For us, the difference between the sight of a truck and the open sky is a visceral one, and yet a machine completely fails to distinguish between those two things. But it also underlines why that’s a problem: the person driving the car should have remained in control. One of the reasons I’m interested in self-driving cars is that they’re a technology that still feels incredibly futuristic, and yet it’s already here. It’s not perfect yet, which is why, if you’re driving one of these cars, you’re supposed to keep your hands on the wheel at all times. The guy driving this car trusted his vehicle so much that he was sitting there watching a movie while his car plowed into a truck at 120 km per hour. I’m fascinated by why we would choose to give up our agency and autonomy in ways that might be existentially threatening to us, in return for convenience or this utter belief in the superiority of the technology.

How and when did you become interested in exploring this subject?

I’m always interested in any technology that feels incredibly futuristic and yet sort of boring at the same time. The thing is, when things make that transition really quickly, we don’t actually have any point at which we think through the consequences. And there are massive consequences for these.

There are two main threads that interest me most. On a direct level, robots are going to take most jobs within the next 10-20 years, and we have no plan for what happens at that point. In fact, we’re actively destroying the entire social framework that would have made that possible. There’s a direct connection between automation and the destruction of the social fabric. But there’s a more romantic notion as well. There are obvious, massive blessings to self-driving cars: they’re going to reduce accident rates; they’re going to be better than humans at driving. But for a long time, driving has stood in for a certain kind of individual freedom: the ability to be self-directed, the beauty of the open road. I’m one of those contradictory people who think cars are evil but love driving. So, we’re choosing to automate this thing that we enjoy, as people. Those are the reasons why the figure of the self-driving car becomes really interesting to me.

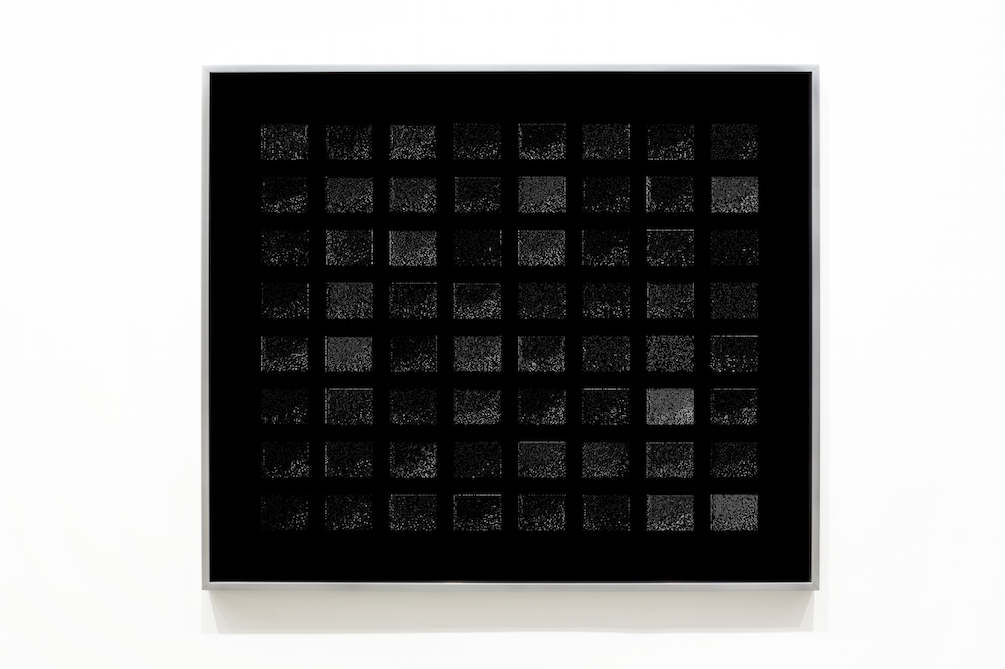

Could you talk about the Activation series of prints? What are the images of, exactly? What is it that's being activated?

To activate is a term from the software, which means to poke it and see how it functions. One of the other things that’s fascinating about this process is the kind of intelligence that’s being built into cars and other intelligent agents. We’ve started to build what are called machine learning systems, which are blank slates that spend time in the world, learning about it. That’s how I built the software that would drive my car. That’s how it works now: this blank slate that you train. But it has a side effect, which is that we have no idea why it makes the decisions that it does. The distant layers, the deeper parts of the software program where the decisions are made, are not accessible to humans.

What I’m doing in the Activations is illustrating the different way in which machines ‘see’ the world. I put ‘see’ in quotes, because machines don’t see through eyes like we do – they see the world as data. As you move through the layers of the network, you move from a view of the road as a human would understand it, to various levels of increasing abstraction. That word abstraction is key, because it’s visual abstraction, but you’re also abstracting information. You’re moving from a human perspective to a machine perspective. At the end of that process is something that humans can’t visually understand, but that is always in a process of negotiation.

You talked a bit about working on the self-driving car, but what else can you tell us about the process of researching and testing it?

Practically, what it meant was creating a neural network and building enough data to train it. I put cameras on a car; I drove around recording hours of dash-cam footage of the road ahead, and hours of data about my driving. I then had to build an Android app for my phone, which I stuck onto the steering wheel so that it registered the wheel’s angle. The end result is you find yourself atop a mountain in the middle of Greece. The best thing about this is not that I built a self-driving car, it’s that I had weeks of beautiful exploration.

The video that’s shown in this show is of me being directed up Mount Parnassus by the self-driving car – by its software. There’s a steering wheel you can see in the video, which is the computer’s prediction of where it thinks it should drive. Of course, I’m also driving up Mount Parnassus, which opens up a whole bunch of questions. That’s what I explore in that video.

What about this relationship between mythology and technology that you refer to? Where do these intersect for you?

For me, it’s always been there. But I felt it came to the fore in my last project, Cloud Index. It was a project about how we think about technology, and that it feels like purely technological thinking is not enough to describe or deal with the world that we live in – a world that is shown by technology to be ever more complex. Mythology gives us strategies for things we haven’t encountered before. The key thing about mythology is not just that they’re old stories, but that they’re constantly retold and updated, and they embody certain principles that are applicable across wide situations. They also describe non-human situations, which is something we’re realising needs to be talked about in the context of machines. On the one hand, they’re built by people and reify and reproduce the politics of the people who built them. There are always humans in the machine, somewhere. Except, there increasingly aren’t. Even if they don’t have the kind of agency that a human has, they have a kind of agency. They begin to affect the world irrespective of their creator’s intentions. A history of storytelling that acknowledges non-human agency is important at this point.

Is programming and building a self-driving car something you are interested in exploring further?

I think at this point, after 6 months, I’ve probably learned everything I needed to learn by building it. I’ve contributed back to the project in my attempt to open source software, so I’ve made tools so that other people could pick it up and take this further. I think for me, the thinking I needed to do around this subject has mostly been done. But we’ll see.

So, what’s next?

What’s next is a book about many of these same kinds of things. So, I’m back to writing for the next stages, and seeing what comes out of that.

*****

James Bridle, "Failing to distinguish between a tractor trailer and the bright white sky", 22 Apr 2017 – 29 Jul 2017, Nome